Quantum field theory (QFT) is the main tool we use to understand the fundamental particles and their interactions. It also appears in condensed matter physics, as an effective description. Unfortunately, it is also a notoriously difficult subject: first because it is tricky to define non-trivial instances rigorously (no rigorous definition is known for any of the theories realized in Nature), and second because, even assuming this can be done, the theory is then very difficult to solve to extract accurate predictions.

There is a subset of QFTs where there is no difficulty: free QFTs. Free QFTs are easy because one can essentially define them in a non-rigorous way first, physicist style, then “solve” them exactly, and finally take the solution itself as a rigorous definition of what we actually meant in the first place. Then, to define the interacting theories, the historical solution has been to see them as perturbations of the free ones. This comes with well known problems: interacting theories are not as close to free ones as one would naively think, so the expansions one obtains are weird: they diverge term by term, and if the divergences are subtracted in a smart way (renormalization), the expansions still diverge as a whole.
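To make "the expansions still diverge as a whole" concrete, here is a minimal sketch on a toy that is not a field theory at all: the ordinary integral Z(g) = ∫ dx/√(2π) exp(-x²/2 - g x⁴), sometimes called zero-dimensional φ⁴. The choice of toy, the function names and the value g = 0.01 are my own assumptions for illustration. Expanding exp(-g x⁴) in powers of g gives the terms (-g)ⁿ (4n-1)!!/n!, which first shrink and then blow up.

```python
import math

def series_term(g, n):
    """n-th term of the expansion of Z(g) in powers of g: (-g)^n (4n-1)!! / n!."""
    # (4n-1)!! = (4n)! / (4^n (2n)!), computed exactly with integers.
    double_factorial = math.factorial(4 * n) // (4**n * math.factorial(2 * n))
    return (-g) ** n * (double_factorial / math.factorial(n))

def partial_sum(g, order):
    """Sum of the series terms from n = 0 up to n = order (inclusive)."""
    return sum(series_term(g, n) for n in range(order + 1))

def exact_z(g, half_width=10.0, n_points=20001):
    """Z(g) by trapezoidal integration on [-half_width, half_width]."""
    h = 2 * half_width / (n_points - 1)
    total = 0.0
    for i in range(n_points):
        x = -half_width + i * h
        weight = 0.5 if i in (0, n_points - 1) else 1.0
        total += weight * math.exp(-0.5 * x * x - g * x**4)
    return total * h / math.sqrt(2 * math.pi)

if __name__ == "__main__":
    g = 0.01
    reference = exact_z(g)
    for order in (1, 3, 6, 10, 15, 20, 30):
        error = abs(partial_sum(g, order) - reference)
        print(f"order {order:2d}: |partial sum - Z| = {error:.3e}")
```

Truncating after the first few terms gives a good approximation, which is the situation described for weak coupling, while pushing to high order makes things worse. The toy has no term-by-term divergences, so it only illustrates the second problem, not renormalization.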

This has not been such a terrible problem historically because in the regime where we observe it, the most important force between particles, the electromagnetic force, is “weak” in the sense of perturbation theory, and thus perturbative expansions still give accurate results at least at reasonably low energy. Renormalizing term by term and truncating the series to the first few terms gives numbers that match what is measured, at least for what can be probed with particle accelerators that fit on Earth. Then the problem that maybe the theory itself does not exist is dismissed as secondary. However, for the strong force, described by quantum chromodynamics (QCD), this does not work. Perturbation theory is inaccurate at least at low energy, where quarks get strongly bound and are not approximately non-interacting. So it is difficult to make computations, and as a result the question of whether or not it is even possible in principle to define the theory becomes important: if you can no longer compute stuff, you are not as sure that the object you manipulate even makes sense. In fact, one of the Millennium prizes of 1 million dollars will be awarded to whoever can define a quantum field theory like QCD rigorously. It’s probably the most difficult way to make 1 million dollars.

So, to summarize, there exist specific models of quantum field theories like quantum chromodynamics, that are useful in the real world, that we are more or less convinced are right (in some regime, the model predicts exactly what is observed) but that we don’t know how to define rigorously and for which the standard computation methods (which we could potentially use in a yolo fashion) don’t work.

Now we get to what I find interesting and perhaps insufficiently mentioned usually: this problem of QFT has been attacked from two completely different angles, by two different communities, and with a radically different philosophy. In a nutshell the philosophies are:

- Make the problem simpler, hoping the tools you build to define and solve the simple instances are more or less generic, and work your way up to real QCD.

- Make the problem more complex, so that it can miraculously be solved exactly thanks to the extra structure, and progressively reduce or deform the structure to get to real QCD.

I won’t hide that I prefer the first approach: it’s the one I am most interested in and to which I very modestly tried to contribute recently. However, the second one deserves to be presented because I think it is paradoxically the most mainstream, at least in terms of fame of those who defend it, and perhaps the most surprising. It’s one that illuminates best the mindset of theoretical physicists and high-energy physicists in particular.

At first sight it seems weird that making things more complex makes the problem solvable. But I think it’s a quite standard pattern in theoretical physics, at least in the last century. Typically you start from a simple non-trivial model. You realize that the things you would want to compute can be expressed as a sum of infinitely many complicated terms. It is hopeless, too generic, almost random. Then, you complexify the model to add “structure” in such a way that this infinite mess orders itself into a few simple terms, through magical cancellations, or because all the terms now make a geometric series. At the same time you can use additional structure to cure divergences in a neater way than with standard renormalization. The most famous extra structure is supersymmetry, but in lower dimensions (1+1 instead of 3+1) there is also an infinite family of quantum field theories precisely designed to make the difficulty go away (the so called “integrable” QFTs).

The idea of this approach is to reproduce the “exactly solvable free field theory” + “perturbation theory” miracle, but this time with new candidates for the starting points, exactly solvable theories. As for free field theories, exactly solvable theories can be defined (more or less rigorously) implicitly through their solutions, so this problem seems dealt with as well. This approach has mesmerized physicists: the infinite richness of exactly solvable theories has provided nice qualitative understanding of some weird phenomena like quark confinement. However, I think it will not go beyond that, because we see in these models only the structure we put in. This ordering, this simplification of the mess is precisely what does not happen in most of the real quantum field theories we see in Nature.

It’s a bit like trying to learn about mountains and volcanoes by studying the Eiffel tower or the Chrysler building. They are all pointy things going upward, but what we learn from one by studying the other is necessarily limited. Further the Chrysler building has beautiful metallic gargoyles, which end up attracting all the attention. In the same way, the idiosyncrasies of the structured solvable models become the center of attention, an interest on their own, and we end up forgetting what we came here for.

One of the ornaments of the Chrysler building – Norbert Nagel / Wikimedia Commons CC-BY-SA 3.0

A historical hope was that, deep down, Nature was like the Chrysler building, that there was structure all the way down. But there is not much empirical evidence for this belief. It seems the complex models that make things simpler are really man-made, and don’t describe what we see. Now I would say most people in the field no longer use this argument as a defense of the study of highly structured theories, but rather say that these well understood theories give us a window into what really happens in the more realistic ones that we cannot solve. Ultimately however, I think we all get too fascinated by the gargoyles.

The alternative is to really make things simpler, but try to keep the mess that makes the theories what they are. It’s like studying a pile of dirt, then a small hill, in the hope that we will understand mountains one day. Down this road, there is no discovery of rich structure, and no “two birds one shot” miracle of defining the theory through its exact solution. You keep two distinct problems, and you lose the shininess of polished chromium. The first steps were made by the warrior monks of theoretical physics, the constructive field theorists, starting in the seventies. They focused on defining the theories rigorously, and managed to go up to the self-interacting scalar field in 2+1 dimensions, aka $\phi^4_3$, which is to QCD what the Zugspitze is to Everest.

$\phi^4_3$ is not QCD, but it does not cheat: a large part of the mess is still there. But then going beyond this remarkable achievement turned out to be difficult. The mess of $\phi^4_3$ is maximal if you want to solve it, but if you merely want to define the theory, the difficulty is milder than for QCD. Technically, the theory is superrenormalizable, whereas QCD is just renormalizable. So far no one managed to get results as strong as what was obtained for $\phi^4_3$ while removing the “super”. Unfortunately adding one dimension to the self-interacting scalar field, with $\phi^4_4$, which would constitute the natural next step and bring us to the right number of physical dimensions, is not possible because while renormalizable in the usual sense, it turns out that the theory does not exist. This is a subtle result: while $\phi^4_4$ was long suspected to be ill-defined, the model was definitively slain by Aizenman and Duminil-Copin only a few months ago.

The constructive field theorists solved part of the problem (the definition), without cheating, but only up to a point. This is a success or a failure depending on how you look at it. To me it’s mostly a success. However, computing what the models predict, not just defining them, remains to be done.

Because of the limitations of the perturbative approach to QCD, the problem of computing its predictions in the strongly coupled regime was tackled by the hyper pragmatic lattice gauge theorists. They went for brute force and used the universal bazooka of physics, the Monte-Carlo method, to get pretty good estimates of many quantities of interest (like the masses of composite particles). This is a remarkable achievement. The Monte-Carlo method is universal, it thrives in the mess and absence of structure, and it works in theory for basically any physics problem. It is the ultimate joker, what to try when all hope is lost. But it is a costly joker. With arrays of supercomputers scattered all around the world, the lattice gauge theorists only managed to reach a limited precision, and increasing the quality of the estimates will require a tremendous increase in computational power.

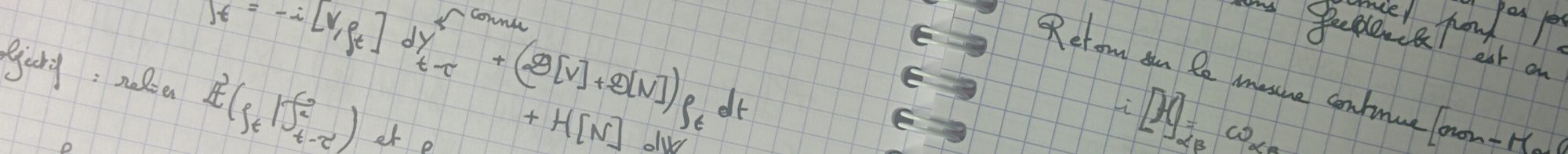
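To give a feel for why Monte-Carlo is both universal and costly, here is a minimal sketch of the Metropolis algorithm on a one-variable toy: estimating the average of x² under the weight exp(-x²/2 - g x⁴). This is emphatically not lattice QCD; the toy, the function names, the step size and the coupling g = 1 are all assumptions of mine for illustration. The method needs no structure at all, but the statistical error only shrinks roughly like one over the square root of the number of samples.

```python
import math
import random

def action(x, g):
    """Toy 'action' S(x) = x^2/2 + g x^4 for a single variable."""
    return 0.5 * x * x + g * x**4

def metropolis_x2(g, n_samples, step=1.5, seed=0):
    """Estimate <x^2> under the weight exp(-S(x)) with the Metropolis algorithm."""
    rng = random.Random(seed)
    x = 0.0
    total = 0.0
    for _ in range(n_samples):
        proposal = x + rng.uniform(-step, step)  # symmetric random move
        # Accept with probability min(1, exp(S(x) - S(proposal))).
        if rng.random() < math.exp(min(0.0, action(x, g) - action(proposal, g))):
            x = proposal
        total += x * x
    return total / n_samples

def reference_x2(g, half_width=10.0, n_points=20001):
    """<x^2> by direct numerical integration (only feasible because the toy is tiny)."""
    h = 2 * half_width / (n_points - 1)
    numerator = denominator = 0.0
    for i in range(n_points):
        x = -half_width + i * h
        w = math.exp(-action(x, g))
        numerator += x * x * w
        denominator += w
    return numerator / denominator

if __name__ == "__main__":
    g = 1.0  # strong coupling: truncated perturbation theory is of no help here
    target = reference_x2(g)
    for n_samples in (1_000, 10_000, 100_000):
        estimate = metropolis_x2(g, n_samples)
        print(f"{n_samples:7d} samples: error = {abs(estimate - target):.4f}")
```

Gaining one more digit costs about a hundred times more samples. For a real lattice gauge theory each sample is itself an update of an enormous field configuration, which is where the supercomputers come in.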

Until recently, Monte-Carlo was the only game in town. It still is if one wants to attack the mountain of QCD directly, but what about the smaller hills the constructive field theorists made sure exist? There was an increase of interest recently, which I mentioned in a previous post. It’s difficult to build new methods directly for QCD, but for simpler theories like $\phi^4_2$, which are brutal simplifications, it is manageable. I think it started being interesting when Slava Rychkov and his collaborators managed to beat Monte-Carlo with a method called renormalized Hamiltonian truncation (RHT), for this very simple model. Then the Monte-Carlo people improved their simulations, and beat RHT again. The perturbation theory people pushed their method to the edge by Borel resumming their expansions to order 8, and arrived roughly in the same league. Meanwhile, the tensor network approach, which we work on in Munich, was used by other groups to reach roughly the same precision as the latest generation Monte-Carlo. Together with Clément Delcamp at the Max Planck Institute, we found this game fun and used the most advanced tensor network renormalization method together with a smarter discretization to obtain slightly more precise results than everyone else, at least until another method beats us in the next few months.

Of course, we are falling into the same kind of trap as the gargoyle fans. We image to amazing precision a small hill that is not what we ultimately care about. It’s a good benchmark, since it does not cheat with extra structure, but its relevance is limited because all the methods we use (apart from Monte-Carlo) do not trivially extend to more complicated problems. Recently, the RHT people have boldly attacked $\phi^4_3$, which is basically as far as the constructive field theorists went. We expect our tensor networks to be competitive there as well, but clearly we need to work more for this to happen. Meanwhile QCD remains ruled by the lattice gauge theorists, and will likely remain so for quite some time.

Quantum field theory, in the number of dimensions of the real world (3+1), remains impossible to define and hard to solve away from trivial theories. The high-energy theorists tried to find the answer in structure, to bypass the mess. This is the skyscraper approach. The constructive field theorists tried to make sure the whole enterprise made sense and the theories existed. The lattice gauge theorists attacked the problem head on, obtaining results at a massive computational cost. Finally, smaller communities started competing to solve the simpler models the constructive field theorists had defined, hoping to one day escape the tremendous computational thirst of Monte-Carlo. I put the last one in the pile of dirt approach. Less shiny for sure, but the mess has to be dealt with.

Very nice. Two questions.

1. “You only live once fashion” ??

2. What about quantum computers?


For the yolo fashion, I just meant like “every term diverges, the series diverges, but who cares, you only live once, let’s take the risk”. I meant Yolo as an invitation to carelessness. If you only live once, why should you care about ill-defined steps in computations?

Quantum computers are another interesting route. I know less about the prospects for lattice gauge theories, that’s why I didn’t mention it, but I know it is quite an active subject (if only at MPQ). As far as I understand, at the end of the day, you will still be sampling observables, and thus at least for simple simulators, I imagine the errors still decrease only as the inverse square root of the time you put in. So their main use will likely not be to get 10^{-10} precision on masses (as far as I understand…). But I glossed over an important limitation of Monte-Carlo so far: it is difficult to access real time evolution, and some parameter regimes (typically with strong chemical potential) are inaccessible because of the sign problem. This is where you expect that quantum computers or alternative classical methods (tensor networks, RHT, …) could do much better than Monte-Carlo. Most papers mention this point in the introduction as the main advantage over Monte-Carlo.
