I have found a neat nuclear physics problem, and have written a draft of an article about it. Below I explain how I came to be interested in this problem, give some context most physicists may not be familiar with, and briefly explain the result.

A while back, I took a small part in the organization of the French public debate on radioactive waste management. My work was not very technical, but it got me interested in the rich physics involved. The debate itself didn’t allow me to do real research on the subject: it was certainly not what was asked of me, and there was already so much to learn about the practical details.

After the debate ended, I kept on reading about the subject on the side. In particular, I read more on advanced reactor designs, where I found a neat theoretical question which I believe was unanswered.

I stumbled upon the problem that has occupied me for the last 6 to 9 months while reading Sylvain David’s PhD thesis on accelerator-driven subcritical reactors (ADSR). Such reactors, popularized by Nobel Prize winner Carlo Rubbia, work with a fissile core that is subcritical, so that the chain reaction dies off exponentially on its own. Before explaining why this is interesting and what questions it raises, I think it makes sense to explain how a standard reactor works.

Standard reactors have to be exactly critical, that is, each fission should produce on average exactly one new fission. Away from this point, the chain reaction dies off or multiplies exponentially fast. It is quite surprising that this sweet spot, where the output power is flat, can be reached in practice. This is done mainly by exploiting two mechanisms: the existence of delayed neutrons, and the temperature dependence of reactivity. Delayed neutrons explain why the timescales involved are manageable. Such neutrons are not produced immediately by the fission (unlike prompt neutrons), but by the subsequent decay of fission products, which takes place on much longer timescales (seconds, minutes, or more). A standard reactor is slightly subcritical on prompt neutrons alone, and becomes critical only thanks to delayed neutrons. These are only a small fraction of all neutrons, but this fraction is sufficient to create a narrow band of slow, controllable evolution around criticality, which allows operators to tune reactivity in real time. In addition, for typical fuel loadings, an increase in temperature tends to reduce reactivity, which has a stabilizing effect.
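
To see why delayed neutrons make the timescales manageable, here is a minimal sketch based on the textbook one-delayed-group approximation of point kinetics. It compares the e-folding time of the power after a small reactivity step $\rho$ with and without delayed neutrons. The parameter values (delayed fraction 0.0065, effective precursor decay constant 0.08 s⁻¹, prompt generation time 10⁻⁴ s) are assumptions, roughly typical of a thermal uranium reactor, and are not taken from this post.

```python
# One-delayed-group point-kinetics estimate of the stable reactor period,
# i.e. the e-folding time of the power after a small reactivity step rho.
# All parameter values are assumptions, roughly typical of a thermal U-235 reactor.

BETA = 0.0065            # assumed delayed neutron fraction
DECAY_CONSTANT = 0.08    # assumed one-group effective precursor decay constant (1/s)
GENERATION_TIME = 1e-4   # assumed prompt neutron generation time (s)


def prompt_only_period(rho, generation_time=GENERATION_TIME):
    """Period if all neutrons were prompt: the power grows as exp(rho * t / generation_time)."""
    return generation_time / rho


def delayed_period(rho, beta=BETA, decay_constant=DECAY_CONSTANT):
    """Period with one delayed group, neglecting the prompt generation time (small 0 < rho < beta)."""
    if not 0.0 < rho < beta:
        raise ValueError("this approximation needs 0 < rho < beta")
    return (beta - rho) / (decay_constant * rho)


rho = 0.001  # small positive reactivity step (0.1 %)
print(f"prompt neutrons only:  period = {prompt_only_period(rho):.2f} s")
print(f"with delayed neutrons: period = {delayed_period(rho):.0f} s")
```

With these assumed numbers, the prompt-only period is about a tenth of a second, while the period with delayed neutrons is of the order of a minute, slow enough for active control.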

This all works because of delayed neutrons, whose fraction depends strongly on the type of fuel used. In particular, adding minor actinides (an annoying part of nuclear waste) to the fuel in order to burn them tends to reduce the fraction of delayed neutrons. This a priori rules out a pure waste burner, i.e. a critical reactor using minor actinides as its only fissile fuel: it would have too few delayed neutrons to be controllable.

One objective of subcritical reactors is to make safety less dependent on the characteristics of the fuel. Such reactors are subcritical even when delayed neutrons are accounted for: the chain reaction always decays exponentially fast (and this “fast” is really fast, since the timescale is set by prompt neutrons). For such a reactor to sustain fissions and produce power, it needs to be driven by an intense external source. With current designs, the only source intense enough is a spallation source driven by a proton accelerator with an extremely high current. When a fast proton hits a target made of heavy nuclei such as lead, the collision frees neutrons, which can then induce fissions in a subcritical core. Quite surprisingly (at least to me), this is energetically feasible: if the fission energy is turned into electricity, there is more than enough to drive the accelerator and still supply some to the grid. However, to make electricity production or waste incineration meaningful, the accelerator needs a proton current higher than what can be found off the shelf. This makes the accelerator part of such reactors quite expensive, tricky to build, and potentially unreliable. It is not the only objection to such designs, or more generally to a waste transmutation strategy, but it is certainly one that makes testing a realistic prototype complex and costly.
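
The claim that the energy balance closes can be checked with a back-of-the-envelope sketch. Every number in it is an assumption for illustration, not a design value: a 1 GeV proton releasing about 25 spallation neutrons, 2.5 neutrons per fission, 200 MeV per fission, a thermal-to-electric efficiency of 33 % and an accelerator wall-plug efficiency of 15 %. It also assumes a constant multiplication $k$ per generation, so that one source neutron gives $1/(1-k)$ neutrons in total.

```python
# Back-of-the-envelope energy balance of an accelerator-driven subcritical reactor.
# Every default value below is an illustrative assumption, not a design value.


def fissions_per_source_neutron(k, nu):
    """One source neutron yields 1/(1-k) neutrons in total with constant multiplication k < 1.
    All but the source neutron itself are born in fissions, nu neutrons per fission."""
    return (1.0 / (1.0 - k) - 1.0) / nu


def electric_gain(k, proton_energy_mev=1000.0, neutrons_per_proton=25.0, nu=2.5,
                  energy_per_fission_mev=200.0, thermal_efficiency=0.33,
                  accelerator_efficiency=0.15):
    """Electricity produced per proton divided by the electricity needed to accelerate it."""
    fissions = neutrons_per_proton * fissions_per_source_neutron(k, nu)
    electricity_out = fissions * energy_per_fission_mev * thermal_efficiency
    electricity_in = proton_energy_mev / accelerator_efficiency
    return electricity_out / electricity_in


for k in (0.90, 0.95, 0.97, 0.99):
    print(f"k = {k:.2f}: electric gain = {electric_gain(k):.1f}")
```

With these assumed numbers the gain is about 3 at $k = 0.97$ but drops below 1 around $k \approx 0.91$, which illustrates why the achievable multiplication drives the accelerator requirements.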

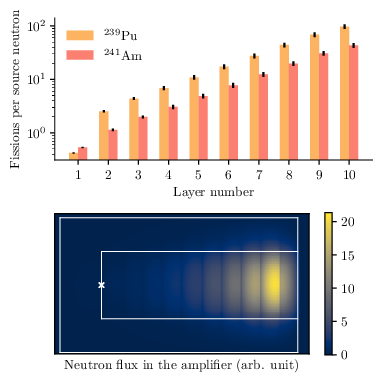

The question of the source requirements for subcritical reactors is really interesting, and more subtle than it may seem. The main parameter of a subcritical reactor is its level of subcriticality, measured by the effective multiplication factor $k_{\text{eff}}$, which is one proxy for its safety. It is typically proposed in the range 0.95-0.99, meaning that one injected neutron produces, on average and asymptotically, 0.95 to 0.99 new neutrons per generation. It also suggests that one neutron injected by the source gives rise to about $1/(1-k_{\text{eff}})$ neutrons in the core, i.e. 20 (for $k_{\text{eff}}=0.95$) to 100 (for $k_{\text{eff}}=0.99$), with a proportional number of fissions. This rule of thumb sets the accelerator requirements for a given fission power output. What is surprising, and what I first discovered in Sylvain David’s thesis, is that there is in fact no rigorous link whatsoever between $k_{\text{eff}}$ and the source requirements. More shockingly, for a fixed $k_{\text{eff}}$, it is in principle possible to have an arbitrarily faint source produce an arbitrarily large number of fissions. This seems outrageously wrong, which is of course why it got me very curious. What I did in the last months was to understand better how this is possible, and to demonstrate that it is doable with physically realistic materials.

The main idea is that criticality is an asymptotic property, while the number of fissions per source neutron is related to transients. To be more precise, we need to consider the average multiplication $k_i$ of generation $i$. The source neutron produces on average $k_1$ neutrons in the first generation, which in turn produce on average $k_1 k_2$ neutrons in the second, etc. Now there are two important quantities to consider.

The first is the asymptotic multiplication

$$k_{\text{eff}} = \lim_{i \to \infty} k_i,$$

which tells us about criticality. If $k_{\text{eff}} > 1$, the number of neutrons explodes exponentially, with a timescale proportional to $1/(k_{\text{eff}}-1)$. Conversely, if $k_{\text{eff}} < 1$, the number of neutrons dies out at long times, and $1/(1-k_{\text{eff}})$ again gives the associated timescale for the decay. This is the metric one needs to look at for safety.

On the other hand, what is crucial for neutron economy and amplification is the total number of neutrons produced,

$$N = 1 + k_1 + k_1 k_2 + k_1 k_2 k_3 + \dots = \sum_{n=0}^{\infty} \prod_{i=1}^{n} k_i.$$

Importantly, $N$ can be very large if the first $k_i$ are larger than 1. One sometimes introduces

$$k_s = 1 - \frac{1}{N},$$

which is what the multiplication per generation $k$ would have had to be to get the same total number of neutrons, had it been generation independent (since then $N = 1/(1-k)$). If $k_i$ is approximately generation independent, then $k_s \approx k_{\text{eff}}$, but in general this is not the case.

The typical example is a just-barely-subcritical plutonium sphere with a source at its center: the first generations, near the source, can have $k_i>1$ since the neutrons are likely to meet plutonium nuclei, but $k_i<1$ for subsequent generations, as neutrons are more likely to leak away. This makes it intuitive that $k_s > k_{\text{eff}}$, but does not yet show that they can be arbitrarily different.

Typically, if you put together two pieces of fissile material that each have a $k_{\text{eff}}$ close to 1, the pair will become critical, so you can’t just increase multiplication this way. The trick is to create some decoupling in the neutron flux. Imagine you have a perfect neutron diode. Now put the two pieces together and separate them with the diode. If there is a source in the first piece, it will create a few neutrons that leak into the second one through the diode, where they create more fissions and neutrons. However, the neutrons created in the second piece can’t flow back into the first. It is thus clear that the $k_{\text{eff}}$ of the pair is the same as that of a single piece: the asymptotic behavior is unchanged, but the multiplication has increased. If we go from 2 to $n$ pieces, the multiplication of the stack increases exponentially in $n$ while $k_{\text{eff}}$ stays constant.
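
The diode argument can be checked with a toy model. Each stage is assumed (hypothetically) to turn every neutron of one generation into $k$ neutrons of the next generation in the same stage, plus $t$ neutrons sent forward through a perfect diode into the next stage, with nothing ever flowing backward. The generation-to-generation matrix is then lower triangular with $k$ on its diagonal, so the stack keeps $k_{\text{eff}} = k$ whatever the number of stages, while stage $j$ (counting from 0) accumulates $t^j/(1-k)^{j+1}$ neutrons per source neutron. The total therefore grows exponentially with the number of stages as soon as $t > 1-k$.

```python
# Toy model of n fissile stages chained by perfect neutron diodes.
# Hypothetical per-generation coefficients, for illustration only:
#   k: neutrons produced in the same stage, t: neutrons sent forward to the next stage.


def stage_totals(k, t, n_stages, n_generations=5000):
    """Total neutrons in each stage over all generations, for one source neutron in stage 0."""
    population = [0.0] * n_stages
    population[0] = 1.0
    totals = [0.0] * n_stages
    for _ in range(n_generations):
        for j in range(n_stages):
            totals[j] += population[j]
        # Perfect diode: stage j is fed by itself and by stage j-1, never by stage j+1.
        population = [k * population[j] + (t * population[j - 1] if j > 0 else 0.0)
                      for j in range(n_stages)]
    return totals


def stage_totals_closed_form(k, t, n_stages):
    """Each stage multiplies its input by 1/(1-k) and passes a fraction t of it forward."""
    return [t ** j / (1.0 - k) ** (j + 1) for j in range(n_stages)]


k, t = 0.95, 0.1
for n in (1, 2, 5, 10):
    print(f"{n:2d} stages: N = {sum(stage_totals(k, t, n)):.0f} (k_eff = {k})")
```

With the assumed $k = 0.95$ and $t = 0.1$, each stage doubles the output of the previous one: 1, 2, 5 and 10 stages give about 20, 60, 620 and 20460 neutrons per source neutron, all at $k_{\text{eff}} = 0.95$.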

The problem is that there are no perfect neutron diodes in nature. In 1957, it was proposed to combine cadmium, a thermal neutron absorber, with a moderator: fissions produce fast neutrons, which pass through the cadmium as if it were transparent, then go through a moderator, and then induce new fissions in the next stage. The neutrons from the second fission stage can go back through the moderator, but they are then thermalized and thus get absorbed by the cadmium. This produces an approximate diode.

How far can this be pushed? As far as I can tell from a rather tedious review of the literature, not much has been tried. Some physicists have made back-of-the-envelope calculations of the multiplication that could be achieved, assuming given reflection and transmission coefficients between stages. The multiplication reached per stage is huge, but the result is of course rather trivial, since the assumptions are ad hoc and it is hard to know whether they are realistic (with known materials and a reasonable reactor geometry). At the other extreme, some physicists have tried to improve existing reactor designs by making them two-stage. This is very realistic, since it is a full reactor model, but the gain is fixed and moderate, because there are only two stages. I wanted to fill the space in between: without designing a super realistic reactor, is it possible to achieve arbitrary multiplication with a simple, scalable design made of materials that exist? Basically, I wanted to be physically realistic, without necessarily being realistic from an engineering perspective.
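
For intuition only, here is a minimal back-of-the-envelope model of the kind mentioned above, with ad hoc coefficients. It extends a perfect-diode chain by letting a small hypothetical fraction $b$ of neutrons leak backward through the moderator and cadmium layer, in addition to the forward coupling $t$ and the in-stage multiplication $k$. The generation matrix is then tridiagonal with constant diagonals, whose largest eigenvalue has the standard closed form $k_{\text{eff}} = k + 2\sqrt{tb}\,\cos\big(\pi/(n+1)\big)$ for $n$ stages; the sketch cross-checks it with power iteration.

```python
import math

# Toy chain of n identical stages with an imperfect diode between neighbors.
# Hypothetical per-generation coefficients:
#   k: neutrons produced in the same stage, t: sent forward, b: leaking backward.


def k_eff_closed_form(k, t, b, n_stages):
    """Largest eigenvalue of the tridiagonal Toeplitz generation matrix."""
    return k + 2.0 * math.sqrt(t * b) * math.cos(math.pi / (n_stages + 1))


def k_eff_power_iteration(k, t, b, n_stages, iterations=20_000):
    """Same quantity, estimated by repeatedly applying one generation to a population vector."""
    population = [1.0] * n_stages
    estimate = 0.0
    for _ in range(iterations):
        nxt = [k * population[j]
               + (t * population[j - 1] if j > 0 else 0.0)
               + (b * population[j + 1] if j < n_stages - 1 else 0.0)
               for j in range(n_stages)]
        estimate = max(nxt)  # population is normalized so that its maximum is 1
        population = [x / estimate for x in nxt]
    return estimate


k, t, b = 0.95, 0.1, 0.001
for n in (2, 10, 100):
    print(f"{n:3d} stages: k_eff = {k_eff_closed_form(k, t, b, n):.4f}")
print(f"bound for any number of stages: {k + 2.0 * math.sqrt(t * b):.4f}")
print(f"power-iteration check (10 stages): {k_eff_power_iteration(k, t, b, 10):.4f}")
```

In this toy model the imperfect diode raises $k_{\text{eff}}$ above $k$, but never beyond $k + 2\sqrt{tb}$ (0.97 for the assumed values), so a chain satisfying $k + 2\sqrt{tb} < 1$ stays subcritical however many stages are stacked.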

I found that there were basically four physically distinct strategies, three of which are usable if the design is to be scalable (by which I mean that it is possible to stack as many stages as one likes). I then dreamed up a design incorporating all three strategies, and carried out Monte Carlo neutron transport simulations to see how it fared. Since I thought the results were cool, I wrote them down in an article draft (comments are very welcome, since I am a bit off my turf).

Figure: the multilayer setup I considered.

In a nutshell, arbitrary amplification is feasible at fixed $k_{\text{eff}}$, with known fissile materials, reflectors and moderators. This opens the way, in principle, to subcritical reactors driven by arbitrarily faint sources, even sources based on radioactive decay. Of course, the “thing” I simulated is far from a complete reactor, and its nice feature (arbitrarily large subcritical amplification) comes at the price of other safety problems that may be unsolvable. Yet I think it deserves to be explored further, and I would like better designs to be proposed. Sure, such fancy designs will probably never be implemented, but from a theoretical point of view they are stimulating. For example, I don’t think it is known how low $k_{\text{eff}}$ can be while still allowing arbitrary amplification in a multistage system. In my simulations, I had to keep $k_{\text{eff}}$ quite close to criticality: unless the reactor is made huge, it is difficult to get $k_{\text{eff}}$ below 0.95, at least with the strategies I explored manually. Could one go as low as 0.8, for example, to make the reactor robust to massive changes in geometry, coolant loss, etc.? How small can a subcritical system amplifying neutrons by a given large factor be? Can such subcritical systems be used as pulsed neutron sources, as alternatives to high-flux critical reactors?

Figure: number of fissions per stage and neutron flux in the multilayer system I considered.

As far as I know, these rather fundamental questions have not been explored much. It is possible that practitioners found long ago that multistage systems have major drawbacks that I have not seen. The people I talked to didn’t know of any showstopper. If any of my readers has hints, or wants to explore related questions further, I am of course very interested in discussing them.

To summarize, it is physically possible to have a strictly subcritical reactor of standard power driven by an arbitrarily weak neutron source. This raises a lot of interesting theoretical questions, and makes me wonder what the main drawbacks are. For more, just read the draft, which I hope to finalize after the holidays.